News & features

AsgardBench: A benchmark for visually grounded interactive planning

Andrea Tupini, Lars Liden, Reuben Tan, Yu Wang, and Jianfeng Gao

Imagine a robot tasked with cleaning a kitchen. It needs to observe its environment, decide what to do, and adjust when things don’t go as expected, for example, when the mug it was tasked to wash is already clean, or…

GroundedPlanBench: Spatially grounded long-horizon task planning for robot manipulation

Sehun Jung, HyunJee Song, Dong-Hee Kim, Reuben Tan, Jianfeng Gao, Yong Jae Lee, and Donghyun Kim

Vision-language models (VLMs) use images and text to plan robot actions, but they still struggle to decide what actions to take and where to take them. Most systems split these decisions into two steps: a VLM generates a plan in…

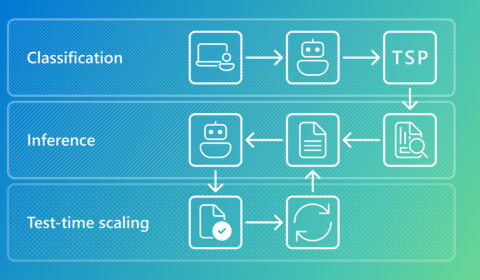

Systematic debugging for AI agents: Introducing the AgentRx framework

Shraddha Barke, Arnav Goyal, Alind Khare, and Chetan Bansal

As AI agents transition from simple chatbots to autonomous systems capable of managing cloud incidents, navigating complex web interfaces, and executing multi-step API workflows, a new challenge has emerged: transparency. When a human makes a mistake, we can usually trace…

In the news | National Academy of Engineering

Doug Burger elected to National Academy of Engineering

Academy membership honors individuals who have made outstanding contributions to engineering research, practice, or education. Burger was elected for accelerating cloud-scale computing and networking infrastructures with field-programmable systems.

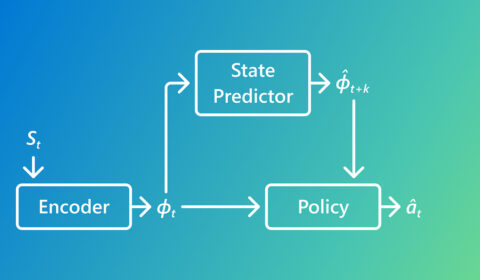

Rethinking imitation learning with Predictive Inverse Dynamics Models

Pallavi Choudhury, Lukas Schäfer, Chris Lovett, Katja Hofmann, and Sergio Valcarcel Macua

This research looks at why Predictive Inverse Dynamics Models (PIDMs) often outperform standard Behavior Cloning in imitation learning. By using simple predictions of what happens next, PIDMs reduce ambiguity and learn from far fewer demonstrations.

UniRG: Scaling medical imaging report generation with multimodal reinforcement learning

Sheng Zhang, Flora Liu, Guanghui Qin, Mu Wei, and Hoifung Poon

AI can help generate medical image reports, but today’s models struggle with varying reporting schemes. Learn how UniRG uses reinforcement learning to boost the performance of medical vision-language models.

In the news | Association for Computing Machinery

Madanlal Musuvathi named ACM Fellow

Musuvathi was selected by his peers for developing methods in concurrency verification and testing, and for his work in machine learning systems design.

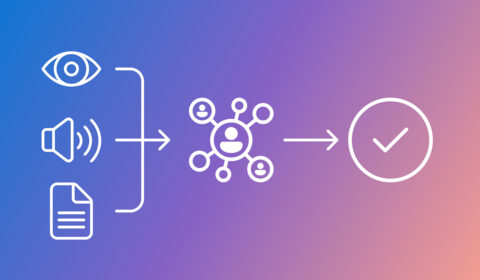

Argos: Multimodal reinforcement learning with agentic verifier for AI agents

Reuben Tan, Baolin Peng, Zhengyuan Yang, Oier Mees, and Jianfeng Gao

Argos improves multimodal RL by evaluating whether an agent’s reasoning aligns with what it observes over time. The approach reduces visual hallucinations and produces more reliable, data-efficient agents for real-world applications.

OptiMind: A small language model with optimization expertise

Xinzhi Zhang, Zeyi Chen, Humishka Hope, Hugo Barbalho, Konstantina Mellou, Marco Molinaro, Janardhan (Jana) Kulkarni, Ishai Menache, and Sirui Li

OptiMind is a small language model that converts business operation challenges, described in natural language, into mathematical formulations that optimization software can solve. It reduces formulation time and errors, and its small size enables fast, privacy-preserving local use.