STEAM: Observability-Preserving Trace Sampling

Sarathi-Serve

Sarathi-Serve (a research prototype) is a high-throughput, low-latency LLM serving framework. This repository contains a benchmark suite for evaluating LLM performance from a systems point of view. It contains various workloads and scheduling…

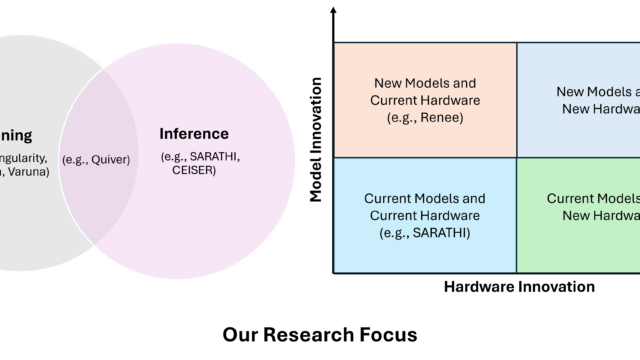

Research Focus: Week of November 22, 2023

A new deep-learning compiler for dynamic sparsity; Tongue Tap could make tongue gestures viable for VR/AR headsets; Ranking LLM-Generated Loop Invariants for Program Verification; Assessing the limits of zero-shot foundation models in single-cell biology.

VIDUR: LLM Simulator

Vidur is a high-fidelity and extensible LLM inference simulator. It can help you plan capacity, find the best deployment configuration for your LLM deployments, and test new research ideas like new scheduling algorithms, optimizations…