Interactive Multimodal Futures (IMF)

Interactive Multimodal Futures focuses on creating interactive systems and experiences that blend the richness and complexity of people and their real, physical world with advanced technology. We seek to leverage multimodal generative AI models that…

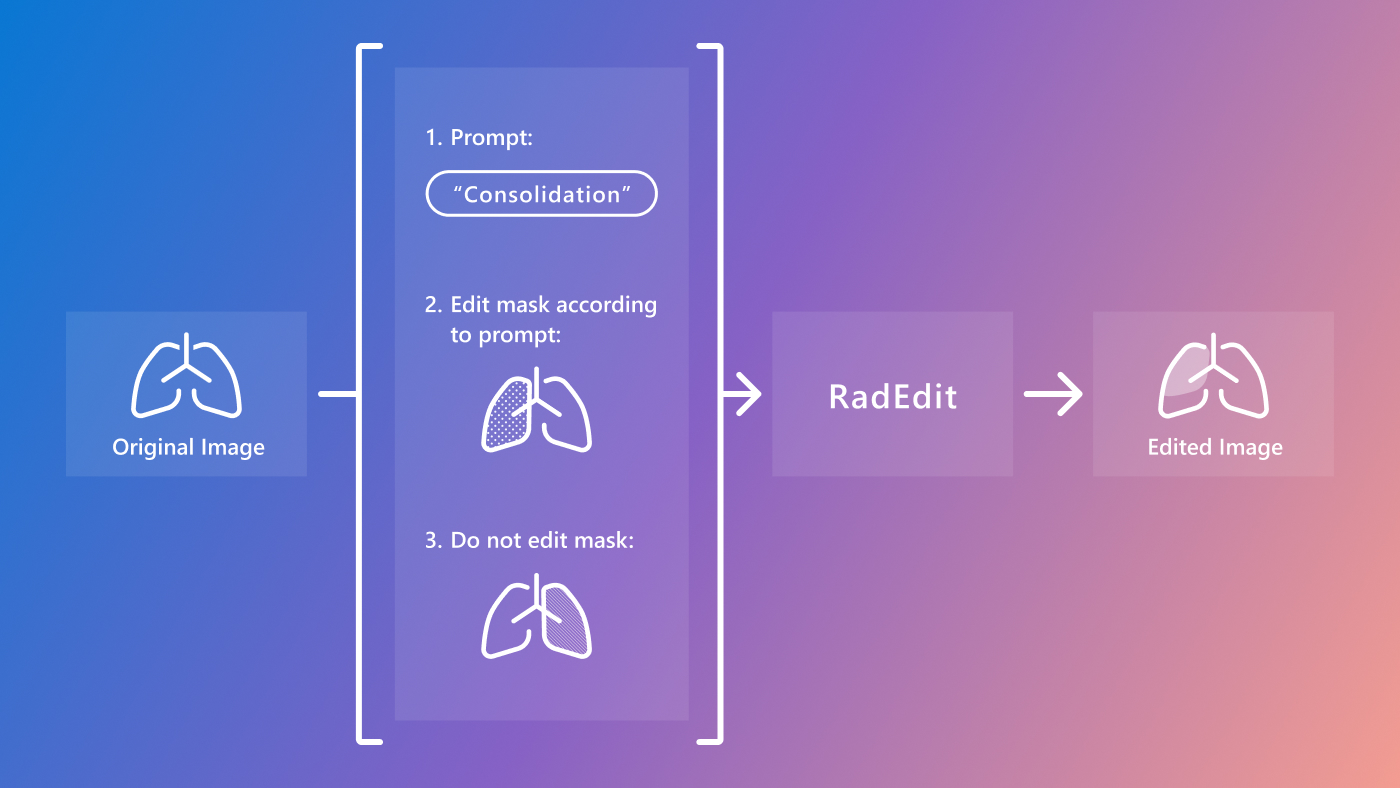

Stress-testing biomedical vision models with RadEdit: A synthetic data approach for robust model deployment

RadEdit stress-tests biomedical vision models by simulating dataset shifts through precise image editing. It uses diffusion models to create realistic synthetic datasets that help identify model weaknesses and evaluate robustness.

Abstracts: September 30, 2024

The personalizable object recognizer Find My Things was recently recognized for accessible design. Researcher Daniela Massiceti and software development engineer Martin Grayson talk about the research project’s origins and the tech advances making it possible.