News & features

µTransfer: A technique for hyperparameter tuning of enormous neural networks

| Edward Hu, Greg Yang, and Jianfeng Gao

Great scientific achievements cannot be …

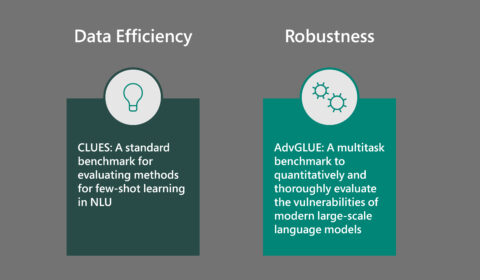

You get what you measure: New NLU benchmarks for few-shot learning and robustness evaluation

| Jianfeng Gao and Ahmed Awadallah

Recent progress in natural language unde…

Efficiently and effectively scaling up language model pretraining for best language representation model on GLUE and SuperGLUE

| Jianfeng Gao and Saurabh Tiwary

As part of Microsoft AI at Scale…

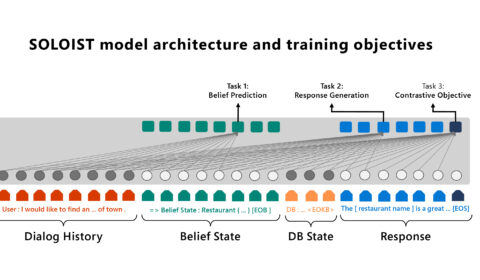

SOLOIST: Pairing transfer learning and machine teaching to advance task bots at scale

| Baolin Peng, Chunyuan Li, Jinchao Li, Lars Liden, and Jianfeng Gao

The increasing use of personal assistant…

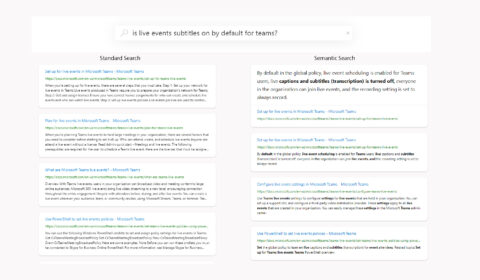

The science behind semantic search: How AI from Bing is powering Azure Cognitive Search

| Rangan Majumder, Alec Berntson, Daxin Jiang (姜大昕), Jianfeng Gao, Furu Wei, and Nan Duan

Azure Cognitive Search…

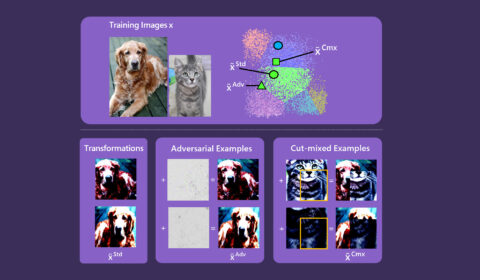

HEXA: Self-supervised pretraining with hard examples improves visual representations

| Chunyuan Li, Lei Zhang, and Jianfeng Gao

Humans perceive the world through observ…

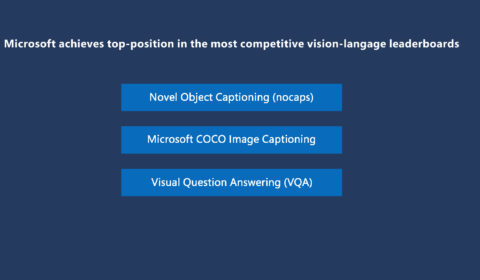

VinVL: Advancing the state of the art for vision-language models

| Pengchuan Zhang, Lei Zhang, and Jianfeng Gao

Humans understand the world by perceivin…

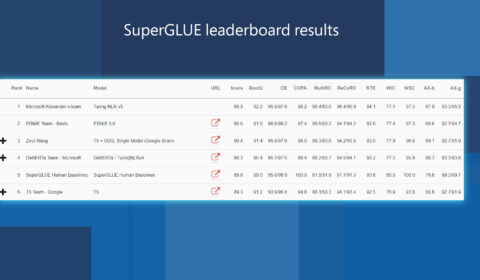

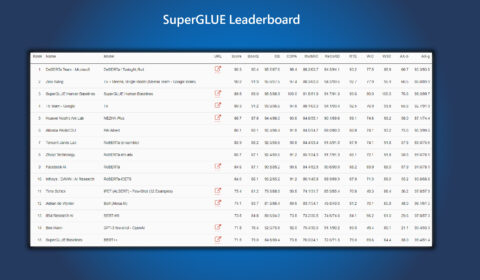

Microsoft DeBERTa surpasses human performance on the SuperGLUE benchmark

| Pengcheng He, Xiaodong Liu, Jianfeng Gao, and Weizhu Chen

Natural language understanding (NLU) is …

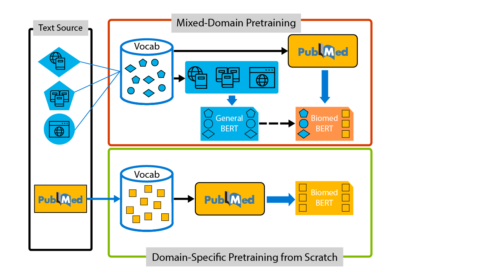

Domain-specific language model pretraining for biomedical natural language processing

| Hoifung Poon and Jianfeng Gao

COVID-19 highlights a perennial problem …