Explainable 3D Reconstruction using Deep Geometric Prior

- Mattan Serry
- Dov Danon
- Hagit Schechter
- Amit H. Bermano

Microsoft Journal of Applied Research | Vol 15: pp. 110-123

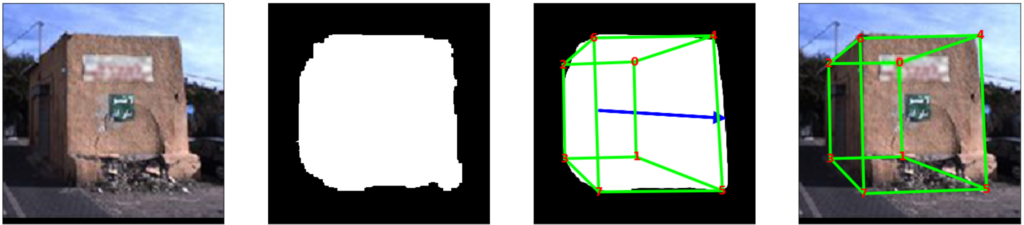

Reconstructing 3D objects from a single image is a notoriously difficult task, with many proposed approaches and settings. In this paper, we investigate a unique variant: fitting cuboids to silhouettes. In other words, we ask how strong geometric priors can benefit reconstruction from texture-less binary silhouettes. Although silhouettes make the task more challenging, they enable training on purely synthesized, perfectly labeled data. For the investigation, we look at street-level images of buildings, since they have a rigorous geometric structure and their silhouettes are easily obtained, for example through instance-level segmentation. Given a noisy, partially occluded segmentation mask as input, we present a three-step network that first generates a cleaner version of the mask, then estimates heat-maps of the cuboid corners, and finally extracts the actual, geometrically coherent vertex positions. Although the steps are jointly trained, each produces human-legible intermediate results instead of a latent code. These results serve both to guide the training process and to provide explainability, a pillar of modern ethical AI systems. Finally, we evaluate our approach on street-level images and through ablation studies.
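
To make the heat-map intermediate concrete, the sketch below shows one generic way a per-corner heat-map could be read out as a pixel coordinate: a normalized expectation over pixel positions (often called a soft-argmax). This is an illustration only, not the extraction step of the network described above, which is learned and enforces geometric coherence. The function name `heatmaps_to_vertices`, the `(K, H, W)` array layout, and the demo values are all assumptions made for this example.

```python
import numpy as np


def heatmaps_to_vertices(heatmaps: np.ndarray) -> np.ndarray:
    """Return one (x, y) pixel estimate per heat-map channel.

    Hypothetical helper: `heatmaps` is assumed to have shape (K, H, W)
    with non-negative values, one channel per cuboid corner.
    """
    k, h, w = heatmaps.shape
    flat = heatmaps.reshape(k, -1).astype(np.float64)

    # Normalize each channel so it sums to 1; all-zero channels stay zero.
    total = flat.sum(axis=1, keepdims=True)
    probs = (flat / np.where(total > 0, total, 1.0)).reshape(k, h, w)

    # Expected column (x) and row (y) index under each normalized map.
    ys, xs = np.mgrid[0:h, 0:w]
    x = (probs * xs).sum(axis=(1, 2))
    y = (probs * ys).sum(axis=(1, 2))
    return np.stack([x, y], axis=1)


# Two synthetic single-peak heat-maps on an 8x8 grid.
demo = np.zeros((2, 8, 8))
demo[0, 3, 5] = 1.0  # peak at row 3, column 5
demo[1, 6, 2] = 1.0  # peak at row 6, column 2
vertices = heatmaps_to_vertices(demo)
```

Because the readout is a weighted average, it yields sub-pixel estimates for blurred peaks, but it does nothing to guarantee that the resulting corners form a valid cuboid; that constraint is what the final step of the described pipeline is meant to provide.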