I recently sat down with Futurum analyst Fernando Montenegro to talk about where AI agents are landing inside real organizations—not the demos, not the hype, but the messy reality of production systems, governance, and scale.

What came through clearly in that conversation is that most organizations aren’t struggling to adopt agents because the technology is unsafe. They’re struggling because their governance models were built for a world that no longer exists.

Traditional security models still depend on a clear distinction between “inside” and “outside,” and that boundary absolutely still matters. What’s changed is the pace. Agents now move fluidly across apps, data sources, and workflows, often spanning environments that were not designed with autonomous or semi-autonomous systems in mind.

When building an agent or app can take minutes, governance models built around week-long, manual review processes quickly break down. The challenge isn’t whether to govern; it’s how. Governance has to account for blurred boundaries and apply the right oversight so teams can move fast without losing control.

When governance strategies boil down to “lock everything down” or “we’ll figure it out later,” the outcome is predictable: either shadow IT with no visibility or uncontrolled adoption. Neither is a win.

The governance questions every AI agent should answer

The most effective organizations aren’t trying to stop agents. They’re figuring out how to classify risk clearly and apply the right controls at the right time.

If governance is just a list of things people can’t do, that’s not governance; it’s a backlog of workarounds waiting to happen. Disruptors and innovators always find a way, whether it’s inside the system or outside your line of sight. When there’s no supported path to do the right thing, shadow IT isn’t a failure of discipline; it’s the natural result. Constraints without alternatives don’t stop innovation. They just push it underground.

Real governance sets boundaries that let teams move fast and stay safe:

- What data sources it can access

- How broadly it can be deployed or shared

- What actions it is allowed to take

- What identity it runs under

- What level of oversight applies as risk increases

A low-risk personal productivity agent is not the same as an agent connected to a core business system. Treating them as if they are leads to predictable points of failure. You either over-restrict everything and stall innovation, or you under-protect what actually matters, leaving critical systems exposed. Governance only works when it reflects the real differences in risk.

Risk isn’t binary—and AI governance needs a risk-based model

A practical way to make this operational is a simple risk-based model. Not theoretical. Operational.

Think in terms of graduated risk zones:

- Low risk: constrained, self-serve scenarios where people can build and use agents within tight guardrails, such as limited data access and limited sharing. Makers don’t need to open a ticket for every idea, and IT doesn’t have to micromanage. Teams can move quickly and build with confidence, and IT can stay out of the critical path.

- Medium risk: broader sharing, more sensitive data, more meaningful actions. These scenarios trigger review and oversight—but without resetting momentum or forcing heavyweight governance on every idea.

- High risk: business-critical workflows tied to core systems. These need deliberate control from day one. Not “nobody can build,” but “the right people build inside the right boundaries, with the right oversight.”

The point isn’t the labels. The point is clarity. Risk is contextual. Governance should be too.
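To make the zones concrete, here is a minimal sketch of how a classification rule might look in code. Everything in it is an assumption for illustration: the `AgentProfile` fields, the `TEAM_SHARING_LIMIT` threshold, and the rules in `classify` are hypothetical and are not taken from any product. Your own criteria will differ.

```python
from dataclasses import dataclass
from enum import Enum


class RiskZone(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical description of an agent; field names are illustrative."""
    name: str
    touches_core_system: bool   # connected to a business-critical system
    uses_sensitive_data: bool   # reads data beyond the maker's own content
    shared_with: int            # number of people the agent is shared with
    can_write: bool             # takes actions rather than only reading


# Assumed threshold: sharing beyond a small team counts as "broad".
TEAM_SHARING_LIMIT = 10


def classify(agent: AgentProfile) -> RiskZone:
    """Map an agent to a graduated risk zone (illustrative rules only)."""
    if agent.touches_core_system:
        return RiskZone.HIGH
    if (agent.uses_sensitive_data
            or agent.can_write
            or agent.shared_with > TEAM_SHARING_LIMIT):
        return RiskZone.MEDIUM
    return RiskZone.LOW


# Oversight attached to each zone, paraphrasing the zones described above.
OVERSIGHT = {
    RiskZone.LOW: "self-serve with guardrails",
    RiskZone.MEDIUM: "review and oversight",
    RiskZone.HIGH: "deliberate control from day one",
}
```

The useful property is that the rule is explicit and checkable: a maker can see why an agent landed in a zone and what would move it to another one.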

Where governance actually gets enforced: the platform

Governance only works when it’s enforced inherently by the platform, not layered on through policy decks, emails, or spreadsheets.

That’s why the concept of a managed platform matters: make security, governance, and operations part of the platform experience (inventory, usage insight, controlled sharing, connector governance, and lifecycle management) rather than an external process held together by best intentions.

Managed environments, our practical adoption of the managed platform concept, are a Power Platform capability, not something limited to a single product or workload. Managed environments enable teams to manage apps, automations, pages, and even agents built in Microsoft Copilot Studio.

One of the cleanest controls is also one of the simplest: sharing limits paired with a clear on‑ramp.

If someone builds something for themselves or for their immediate teammates, that’s one risk profile. If they want to share their solution more broadly, that’s a different one. The platform needs to distinguish between those cases. When it does, you can let people experiment freely—and require deliberate promotion, review, and accountability when something is ready to scale.
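As a rough sketch of that idea, the check below lets a solution be shared freely up to a limit and asks for promotion beyond it. This is not the Power Platform API. The `Solution` type, the `SHARING_LIMIT` value, and the `PromotionRequired` exception are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Solution:
    """Hypothetical record for an app or agent a maker has built."""
    name: str
    promoted: bool = False  # True once it has passed review


# Assumed limit for unreviewed sharing; pick a value that fits your teams.
SHARING_LIMIT = 5


class PromotionRequired(Exception):
    """Raised when sharing exceeds the limit for an unreviewed solution."""


def share(solution: Solution, recipients: list[str],
          limit: int = SHARING_LIMIT) -> list[str]:
    """Return the de-duplicated recipient list, or require promotion."""
    unique = sorted(set(recipients))
    if len(unique) > limit and not solution.promoted:
        raise PromotionRequired(
            f"{solution.name}: sharing with {len(unique)} people exceeds "
            f"the limit of {limit}; request review to promote it."
        )
    return unique
```

The error message doubles as the on-ramp: instead of a flat “no,” the maker is told what the next step is.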

Agents don’t create permission problems—they expose them

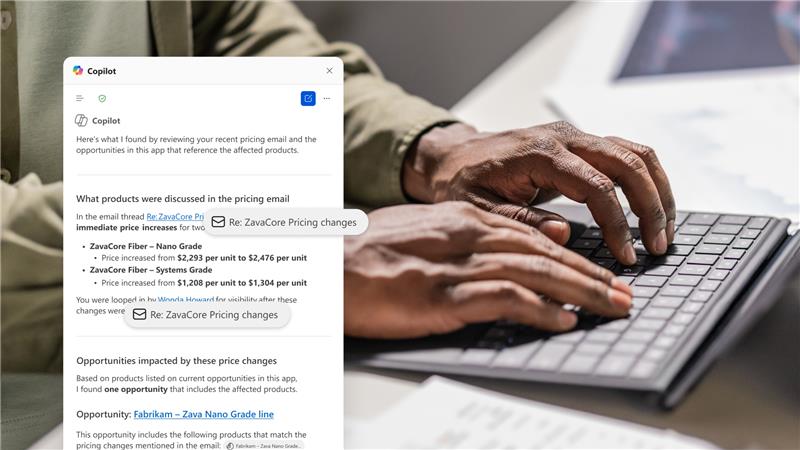

This bears repeating, because it matters: agents generally operate as the calling user. They don’t magically gain new permissions. Which means agents don’t create access problems; they expose the ones you already have, faster.

If users have overly broad permissions today, agents will too. That’s not an agent problem—it’s an identity and access discipline problem. Effective agent governance only works when it’s built on solid foundations.
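A small sketch can make the point tangible. It assumes the simple model described above, where the agent’s access is exactly the calling user’s access. The permission strings and both function names are hypothetical.

```python
def agent_access(user_permissions: frozenset[str]) -> frozenset[str]:
    """An agent running as the calling user gets that user's permissions."""
    return user_permissions  # nothing added, nothing removed


def excess_permissions(user_permissions: frozenset[str],
                       needed: frozenset[str]) -> frozenset[str]:
    """Permissions the user (and so the agent) holds but the task does not need."""
    return user_permissions - needed
```

Running `excess_permissions` against what a task actually requires is one way to surface over-broad grants before an agent starts exercising them.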

Trust by design, with verification built-in

Strong proactive controls matter, but they’re not enough on their own. You still need reactive controls: monitoring, diagnostics, and audit trails, especially when agents take actions with compliance implications.

Trust but verify still applies. Consider the familiar expense-approval analogy: humans unintentionally approve things incorrectly all the time. We manage that risk with audits, compensating controls, and limits on blast radius. Agent risk should be treated the same way: know what happened, understand why it happened, and contain the impact when it doesn’t go as planned.
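Sticking with the expense analogy, here is a minimal sketch of an agent action that combines an audit trail with a cap on blast radius. The `AuditedAgent` class, its `max_amount` limit, and the log fields are assumptions for illustration, not a description of any product’s logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    timestamp: str
    agent: str
    action: str
    amount: float
    reason: str
    allowed: bool


@dataclass
class AuditedAgent:
    name: str
    max_amount: float                        # assumed per-action limit
    log: list = field(default_factory=list)  # in-memory audit trail

    def approve_expense(self, amount: float, reason: str) -> bool:
        """Approve only within the limit, and record every attempt."""
        allowed = amount <= self.max_amount
        self.log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent=self.name,
            action="approve_expense",
            amount=amount,
            reason=reason,
            allowed=allowed,
        ))
        return allowed
```

Denied attempts are logged as well as approved ones, so the record covers what the agent tried to do and not only what it was allowed to do.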

The takeaway

The future isn’t “agents everywhere with no control,” and it’s not “no agents because risk.” Both fail.

The practical path is adaptive governance: classify risk clearly, enforce it through the platform, and create promotion paths so good ideas can scale without turning into tomorrow’s incident response.

That’s how organizations stop playing defense. If you want to learn how you can start saying “yes” safely, please watch the full interview with Fernando.